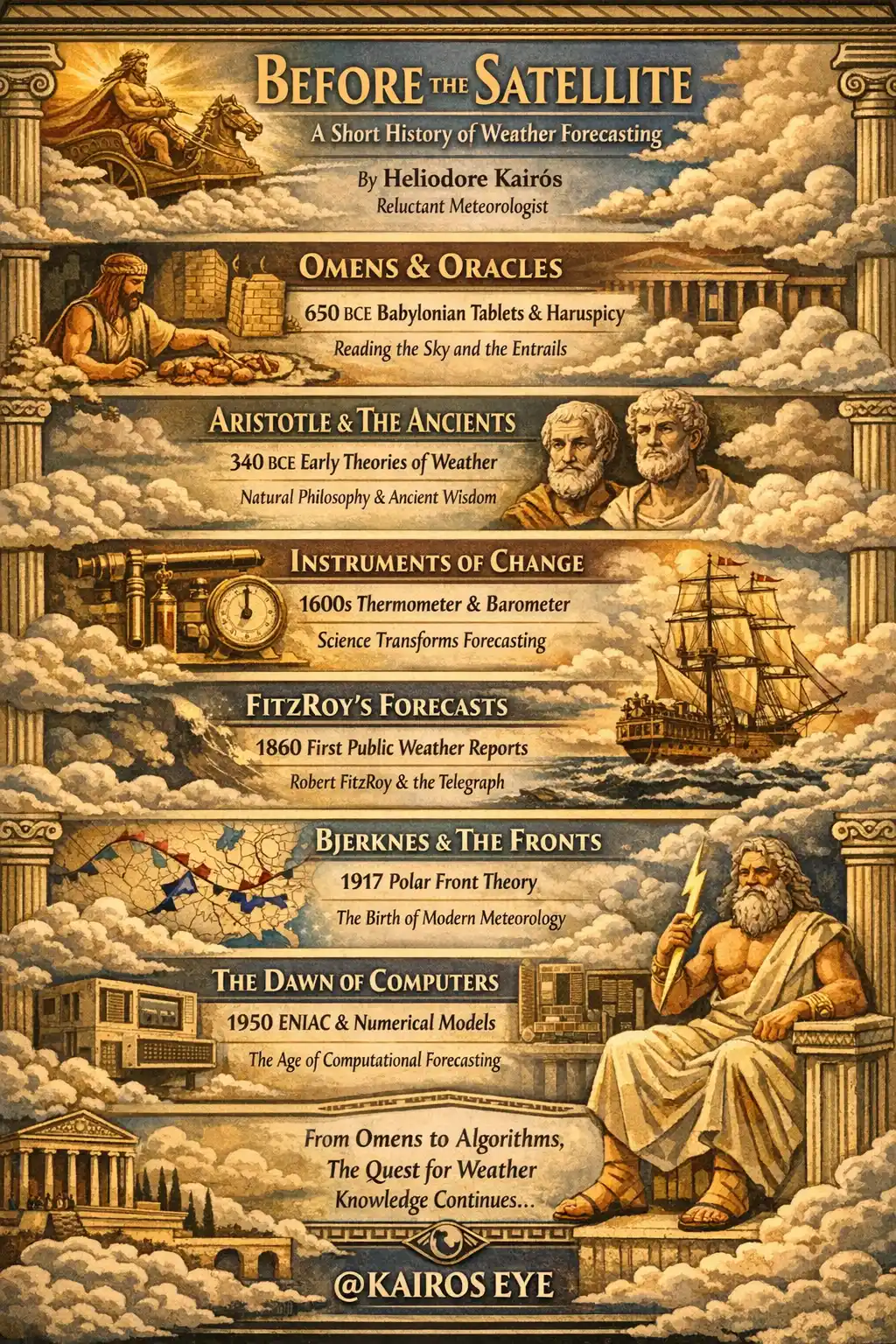

Before the Satellite: A Short History of Weather Forecasting

I should say at the outset that predicting the weather has always been a fundamentally absurd enterprise. The atmosphere is a chaotic fluid system with more variables than any civilisation has ever been equipped to measure, and yet we have been trying to guess what it will do next for roughly four thousand years. The fact that we now occasionally succeed is less a testament to human genius than to human stubbornness, which may, in fact, be the same thing.

What follows is a selective history. Not exhaustive, because exhaustive histories of meteorology tend to be written by academics who have never stood outside in a storm and wondered whether the gods were personally upset with them. I have stood outside in many storms. I have wondered this. The Weathered Pages contain evidence.

Entrails, Omens, and the Babylonian Method

The earliest weather forecasts on record come from Babylon, roughly 650 BCE, inscribed on clay tablets in the cuneiform script that the Mesopotamians used for everything from accounting to theology. The tablet collection known as the Enūma Anu Enlil contains celestial and atmospheric omens: if the sky looks like this, then that will happen. If the sun is surrounded by a halo, rain will come. If the wind blows from the south at dawn, the harvest will be good.

Some of these were surprisingly accurate. The halo observation, for instance, is real atmospheric physics: solar halos form when light refracts through hexagonal ice crystals in cirrostratus clouds, which typically precede warm fronts and their associated precipitation. The Babylonians did not know about hexagonal ice crystals. They did not need to. They had been watching the sky for centuries, and centuries of observation produce patterns, even without a theory to explain them.

Other methods were less rigorous. Haruspicy, the reading of animal entrails (particularly sheep livers), was a standard forecasting technique across the ancient Near East. A priest would sacrifice an animal, examine its liver for irregularities, and issue predictions accordingly. I will not pretend to understand the meteorological logic, though I note that modern television forecasters also examine something they do not fully understand and issue predictions with absolute confidence.

The Greeks, naturally, had opinions. Aristotle's Meteorologica, written around 340 BCE, is the first systematic attempt to explain atmospheric phenomena through natural philosophy rather than divine intervention. Aristotle proposed that weather was caused by two types of "exhalation" rising from the earth: a moist exhalation that produced rain and clouds, and a dry exhalation that produced wind and earthquakes. He was wrong about nearly everything in the specifics, but the ambition was revolutionary. He was saying: this is not the work of Zeus. This is physics. Badly understood physics, but physics nonetheless.

Theophrastus, Aristotle's student and successor at the Lyceum, took a more practical approach. His De Signis Tempestatum ("On Weather Signs") is essentially a manual of folk forecasting: red sky at morning, behaviour of animals before storms, the smell of ditches before rain. It remained a standard reference for nearly two thousand years, which is either a testament to its quality or an indictment of the pace of progress. Possibly both.

The Long Silence, and Then the Instruments

For roughly eighteen centuries after Theophrastus, weather forecasting remained essentially unchanged. Farmers watched the sky. Sailors watched the sea. Everyone watched the animals. There was no theory, no measurement, and no particular expectation that things would improve.

Then, in the span of about two hundred years, everything changed.

Galileo Galilei (or possibly his friend Santorio Santorio; the attribution is disputed) invented the thermoscope around 1603, a primitive device that detected temperature changes without actually measuring them. Evangelista Torricelli invented the mercury barometer in 1643, proving that the atmosphere had weight and that this weight fluctuated. The first reliable thermometers, with sealed tubes and standardised scales, appeared in the early eighteenth century. Daniel Gabriel Fahrenheit produced his mercury thermometer in 1714. Anders Celsius proposed his scale in 1742.

Suddenly, weather was no longer a matter of subjective impression. You could put a number on it. You could write that number down, compare it to yesterday's number, and notice trends. This sounds obvious now, but the intellectual leap from "it feels cold" to "it is minus three degrees" was enormous. It transformed meteorology from an art into something approaching a science, though, as I can attest from personal experience, the art has never entirely gone away.

The barometer, in particular, changed everything. The discovery, made in the decades after Torricelli, that falling mercury tends to precede storms gave anyone with a glass tube and some mercury an actual predictive tool. By the late seventeenth century, gentlemen across Europe were tapping their barometers each morning and declaring to their households whether it would rain. They were frequently wrong, but they were wrong with data, which is a different and arguably superior form of being wrong.

Robert FitzRoy and the Birth of the Public Forecast

The modern weather forecast, as a public service, begins with a disaster and a difficult man.

On 25 October 1859, the steam clipper Royal Charter was wrecked in a violent storm off the coast of Anglesey, Wales. Over 450 people drowned. The storm had been observable in its approach; ships in the Atlantic had recorded the falling barometric pressure. But there was no system to transmit this information to shore in time. The data existed. The communication did not.

Vice-Admiral Robert FitzRoy, already famous as the captain of HMS Beagle during Darwin's voyage, had been appointed head of the newly created Meteorological Department of the Board of Trade in 1854. The Royal Charter disaster galvanised him. In 1860, he established a network of fifteen coastal telegraph stations, each equipped with barometers and anemometers, reporting conditions twice daily. From these reports, FitzRoy began issuing what he called "forecasts," a word he coined for the purpose, preferring it to "predictions" because it sounded less presumptuous.

His first public forecasts appeared in The Times on 1 August 1861. They were crude by modern standards: general statements about wind direction and probable weather for broad regions of the British Isles. They were also, by the standards of the time, miraculous. No one had ever attempted to tell an entire nation what the weather would do tomorrow.

FitzRoy was vilified for it. The scientific establishment mocked him for overstepping the bounds of data. Fishermen complained when forecasts were wrong. The press alternated between praising him when storms were correctly predicted and ridiculing him when sunshine arrived instead of the promised gale. FitzRoy, who suffered from depression throughout his life, killed himself on 30 April 1865 at the age of fifty-nine. After his death, the forecasts were discontinued for a time, which tells you everything you need to know about institutional gratitude.

I think about FitzRoy often. He was trying to do something useful with imperfect information, under public scrutiny, knowing he would be blamed for every failure and credited for no success. The Weathered Pages contain an entry from a November evening, undated, that reads simply: "FitzRoy deserved better." I do not remember writing it, but I agree with the sentiment.

The Norwegian School and the Language of Fronts

The next great leap came not from instruments but from ideas.

In 1917, Vilhelm Bjerknes and his son Jacob, working in Bergen, Norway, during a period when wartime shortages meant they had fewer weather stations and had to think harder about the data they did have, developed the polar front theory. They proposed that weather in the mid-latitudes is fundamentally driven by the interaction between large masses of cold polar air and warm tropical air. Where these masses collide, "fronts" form, a term borrowed deliberately from the military language of the ongoing war.

The Bergen School, as it came to be known, gave meteorology its modern vocabulary. Warm fronts. Cold fronts. Occluded fronts. The cyclone model of frontal development, in which a small wave on the polar front deepens into a low-pressure system with distinct warm and cold sectors, each producing characteristic sequences of clouds and precipitation. This is still how meteorologists think about mid-latitude weather. When I watch cirrus thickening into cirrostratus ahead of an approaching warm front, I am watching exactly the process that Jacob Bjerknes described in his 1919 paper on the structure of moving cyclones.

The Bergen School also introduced the concept of air masses: large bodies of air with relatively uniform temperature and humidity, classified by their region of origin (continental or maritime, polar or tropical). The interaction of these air masses at their boundaries explained, for the first time, why weather changes. It was not random. It was not divine caprice. It was fluid dynamics on a planetary scale.

Heraclitus once noted, though in a context that was more philosophical than meteorological, that conflict is the father of all things. He may have been thinking of warm fronts and cold fronts. He probably was not. But he should have been.

The Mathematical Dream: Richardson's Impossible Forecast

In 1922, Lewis Fry Richardson, a British mathematician and pacifist who had served as an ambulance driver on the Western Front, published Weather Prediction by Numerical Process. The idea was audacious: take the equations of fluid dynamics that describe atmospheric motion (the Navier-Stokes equations, the thermodynamic energy equation, the equation of continuity), divide the atmosphere into a three-dimensional grid of cells, and solve the equations numerically, step by step, to compute the future state of the atmosphere from its present state.
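
For readers who prefer their history executable, the grid-and-time-step idea can be sketched in a few lines. This is a toy, not Richardson's scheme: it moves a single quantity along a one-dimensional ring of cells with a constant wind, using a first-order upwind difference. The function names, the five-cell grid, and the numbers are all my own assumptions, chosen only to show what "solve the equations numerically, step by step" means in practice.

```python
def step_upwind(field, wind, dx, dt):
    """Advance a 1-D field by one time step using a first-order upwind scheme.

    Assumes a constant, non-negative wind and a periodic domain (the last
    cell wraps around to the first). Both are simplifications for illustration.
    """
    courant = wind * dt / dx  # fraction of a cell the air crosses per step
    if not 0.0 <= courant <= 1.0:
        raise ValueError("unstable: need 0 <= wind*dt/dx <= 1")
    # field[i - 1] with i == 0 reads field[-1], which gives the wrap-around.
    return [field[i] - courant * (field[i] - field[i - 1])
            for i in range(len(field))]


def forecast(field, wind, dx, dt, steps):
    """Step the field forward `steps` times and return the final state."""
    for _ in range(steps):
        field = step_upwind(field, wind, dx, dt)
    return field


# Hypothetical numbers: five 200 km cells, a 10 m/s wind, one warm anomaly.
initial = [0.0, 0.0, 1.0, 0.0, 0.0]
print(forecast(initial, wind=10.0, dx=200_000.0, dt=20_000.0, steps=2))
```

With these particular numbers the wind crosses exactly one cell per step, so the anomaly simply moves two cells downwind. Halve the time step and it smears out as it travels, which is the scheme's own numerical error rather than anything the atmosphere did. Multiply the bookkeeping by several variables, three dimensions, and a planet, and the six weeks begin to look reasonable.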

Richardson actually attempted a test forecast by hand. Working during lulls in the fighting near Champagne, he calculated a six-hour pressure change for a single point in central Europe using a grid of roughly 200 kilometres. It took him six weeks. The result was catastrophically wrong: he predicted a pressure change of 145 hectopascals in six hours, far larger than anything that actually occurs, on a day when the observed pressure barely moved. The error was later traced to imbalances in the initial data, which set off spurious fast-moving gravity waves in the equations, but at the time, it appeared to prove that numerical weather prediction was a beautiful impossibility.

Richardson was undaunted. In the final chapter of his book, he described a fantasy: a vast hall, a "forecast factory," filled with 64,000 human computers (people, not machines), each responsible for solving the equations for one cell of the atmospheric grid, coordinated by a conductor on a central podium who shone coloured lights to speed up or slow down different sections to keep the computation synchronised with the actual passage of time.

It was mad. It was impractical. It was also, in its essential concept, exactly what happened when electronic computers became available thirty years later.

ENIAC and the First Computational Forecast

In 1950, a team led by Jule Charney at the Institute for Advanced Study in Princeton produced the first successful numerical weather forecast using ENIAC, one of the earliest electronic computers. They used a simplified version of the atmospheric equations (barotropic, single-level, filtering out the fast gravity and sound waves of the kind that had wrecked Richardson's calculation) and produced a credible 24-hour forecast for North America.

The computation took 24 hours to produce a 24-hour forecast, which is to say it was not yet faster than simply waiting to see what happened. But the principle was established. The equations worked. The grid worked. The only limitation was computing power, and computing power, unlike the atmosphere, was getting more predictable by the year.

By the 1960s, operational numerical weather prediction was running at weather services in the United States, the United Kingdom, and Sweden. The models grew more sophisticated: multiple atmospheric levels, better physics for radiation and convection, finer grids. Each new generation of computer enabled each new generation of model.

The European Centre, and What Six Days Means

In 1975, the European Centre for Medium-Range Weather Forecasts (ECMWF) was established in Reading, England, with a mandate to push forecasts beyond the two or three days that national services could reliably manage. Today, the ECMWF's Integrated Forecasting System (IFS) is widely regarded as the most accurate global weather model in operation. It runs on a grid with approximately nine-kilometre horizontal resolution and 137 vertical levels, and it assimilates roughly 800 million observations per day from satellites, weather balloons, ships, aircraft, buoys, and ground stations.

A modern five-day forecast is as accurate as a one-day forecast was in 1980. This is a genuine, measurable, extraordinary achievement. The atmosphere is a chaotic system (in the mathematical sense: sensitive to initial conditions, as Edward Lorenz demonstrated in his 1963 paper, the origin of what was later christened the "butterfly effect"), and yet we have pushed the boundary of useful prediction from one or two days to six or seven, sometimes ten, through sheer computational force and observational coverage.

I will concede, with the reluctance that regular readers will expect, that this is impressive. The Weathered Pages still outperform any satellite when it comes to whether it will rain on my terrace this afternoon, but for predicting the track of a cyclone five days hence, I must admit that a nine-kilometre grid and 800 million daily observations have certain advantages over a notebook and a barometer.

Nikolas Faros, of course, presents these forecasts on television as though he personally computed them. He has never, to my knowledge, solved a partial differential equation or even looked at one with sustained curiosity. The man reads an autocue. The autocue reads the ECMWF. The ECMWF reads the atmosphere. This is the chain of transmission through which modern weather knowledge reaches the Greek public, and if it does not make you slightly melancholy, you are not paying attention.

What We Still Cannot Do

For all the progress, there are hard limits. Lorenz showed that the atmosphere's chaotic nature imposes a theoretical maximum on deterministic forecasting of roughly two weeks. Beyond that horizon, small errors in the initial state amplify until the forecast is no better than climatology (the long-term average for that date and location). No computer, however powerful, will extend this limit, because the limitation is in the physics, not the computing.
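
The amplification of small errors is easy to watch for yourself. The sketch below integrates Lorenz's 1963 three-variable system twice, from starting points that differ by one part in a hundred million, and records how far apart the two runs drift. The parameter values are the classic ones from his paper; the fourth-order Runge-Kutta integrator, the step size, the starting point, and the function names are my own choices. It is a toy convection model, not an atmosphere, so it illustrates the mechanism and says nothing about the two-week figure itself.

```python
import math


def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz (1963) system, classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """One fourth-order Runge-Kutta step (an integrator chosen for this sketch)."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def separation_over_time(perturbation=1e-8, dt=0.01, steps=4000):
    """Distance between two runs whose initial x differs by `perturbation`."""
    run_a = (1.0, 1.0, 1.0)
    run_b = (1.0 + perturbation, 1.0, 1.0)
    distances = []
    for _ in range(steps):
        run_a = rk4_step(run_a, dt)
        run_b = rk4_step(run_b, dt)
        distances.append(math.dist(run_a, run_b))
    return distances


distances = separation_over_time()
for step in (0, 1000, 2000, 3000, 3999):
    print(f"step {step:4d}: separation {distances[step]:.3e}")
```

The separation starts at the size of the perturbation and ends up as large as the system itself, at which point the second run tells you nothing about the first. A faster computer lets you take more steps. It does not change where the two runs part company.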

Ensemble forecasting, running the same model dozens of times with slightly varied initial conditions to produce a range of possible outcomes, has partially addressed this by replacing single-point forecasts with probability distributions. Instead of "it will rain on Thursday," the forecast becomes "there is a 70% chance of rain on Thursday." This is more honest. It is also more confusing for anyone who wants a simple answer, which is to say, for everyone.
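
The arithmetic behind that 70% is nothing more exotic than counting. Here is a minimal sketch, assuming a stand-in "model" (the logistic map, a one-line chaotic system that has no connection to rain) and a made-up rule that a final value above 0.5 counts as a wet day. Every name, threshold, and number is hypothetical; operational ensembles perturb far more than one starting value.

```python
import random


def toy_model(x, steps, r=3.9):
    """Stand-in forecast model: iterate the chaotic logistic map."""
    for _ in range(steps):
        x = r * x * (1.0 - x)
    return x


def ensemble_probability(x0, steps, members=50, spread=1e-4,
                         threshold=0.5, seed=0):
    """Fraction of ensemble members whose final value exceeds `threshold`.

    Each member starts from x0 plus a small Gaussian perturbation,
    mimicking uncertainty in the observed initial state.
    """
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    hits = 0
    for _ in range(members):
        start = min(max(x0 + rng.gauss(0.0, spread), 0.0), 1.0)
        if toy_model(start, steps) > threshold:
            hits += 1
    return hits / members


print("short lead time:", ensemble_probability(0.3, steps=1))
print("long lead time: ", ensemble_probability(0.3, steps=60))
```

At a short lead time the members have not had time to diverge, so they all agree and the probability is a flat yes or no. At a long lead time they scatter, and the fraction that end up "wet" is the honest answer.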

And precipitation remains stubbornly difficult. Temperature forecasts are excellent. Wind forecasts are good. But predicting exactly where, when, and how much it will rain, particularly convective rainfall from thunderstorms, remains the great unsolved problem of operational meteorology. The processes that trigger a thunderstorm occur at scales smaller than the model grid, and parameterising them (approximating their effects with simplified equations) is as much art as science.

As Heraclitus never said, but might have appreciated: we have learned to see the river, to measure its depth and speed and temperature, to model its flow with extraordinary precision. We still cannot tell you which particular stone it will splash over next Tuesday afternoon. For that, you will need to go outside, look at the sky, and make your own assessment. Possibly with a watch on your wrist that displays the barometric pressure, the humidity, and the wind speed in real time. I am told such devices exist. I am told they are even, occasionally, useful.

I would not go so far as to recommend one. But if you happened to own one, I would not call you a fool for consulting it. Not out loud, at any rate.